The Hidden Infrastructure Powering the AI Boom

In a recent article for Unite.AI, Iceotope's Francesca Cain-Watson explains how the rapid AI boom is straining power, water, and cooling infrastructure. Cain-Watson argues that efficient, sustainable data center design, especially precision liquid cooling, is now essential for AI to scale responsibly.

Rising resource strain:

AI workloads are raising compute density and pushing U.S. data centers' share of national electricity use from about 4.4% in 2023 to a projected 6.7–12% by 2028, amid a forecast 20% power shortfall. Cooling accounts for a major share of this load, and traditional air-based systems become less efficient as more hardware is packed into smaller spaces, turning efficiency from a “nice-to-have” into a core design constraint.

Community and policy pressure:

Local communities and regulators are increasingly focused on the impact of data center energy and water use. In The Dalles, Oregon, Google’s water consumption reportedly grew from 12% of the city’s supply in 2012 to nearly one-third by 2024, triggering public concern and scrutiny. States are considering limits on new data centers, while the federal government urges that AI expansion not raise household electricity prices or strain water resources.

Cooling innovation and holistic design:

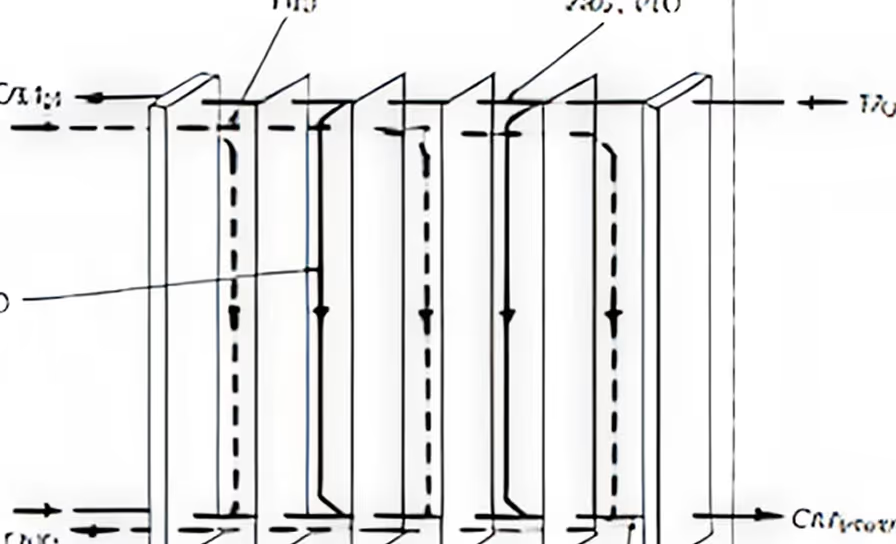

Tech firms like Microsoft and OpenAI are making public “community-first” commitments, but the article argues that durable progress depends on infrastructure-level changes, especially in cooling. Precision liquid cooling, which uses dielectric fluids to remove heat directly at the components that generate it, can cut energy use by up to 40% and water use by as much as 96%, while improving reliability and equipment lifespan. Companies can align cost, reliability, and sustainability by adopting advanced thermal management and designing data center infrastructure for a resource-constrained future.
