Iceotope Precision Liquid Cooling Solutions

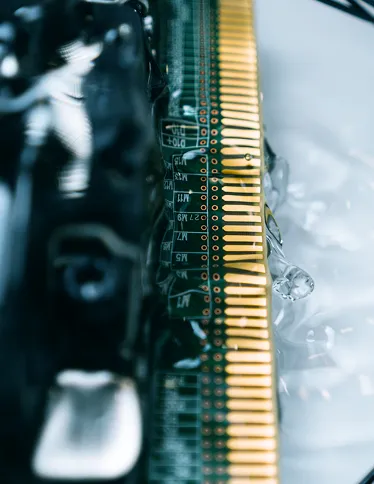

Our Precision Liquid Cooling technology combines the strengths of tank immersion and direct‑to‑chip cooling into a single, rack‑based solution that uses minimal water, cuts carbon emissions, and captures nearly all the heat generated for reuse.

Iceotope solutions direct a small amount of dielectric fluid straight to the hottest system components, eliminating hotspots and keeping CPUs and GPUs within optimal temperature ranges—even for AI and other high‑density workloads.

More reliable. More flexible. More sustainable.

CSPs/Hyperscalers

Cloud service providers (CSPs) and hyperscalers face mounting thermal, energy, and capacity challenges as AI and high‑density workloads push their data centers beyond the limits of legacy air cooling. Iceotope Precision Liquid Cooling directly addresses many of these issues by removing almost all the heat at the source, cutting cooling energy and water use while preserving familiar rack architectures.

Learn more about solutions from Iceotope

AI GPUs and accelerators are driving rack densities from 20–30 kW toward 50–100 kW and beyond, where air cooling becomes inefficient and often uneconomic.

Dense AI workloads create local hotspots and uneven airflow, reducing performance headroom and shortening hardware lifespan.

Many facilities cannot fully utilize power and space because legacy cooling can’t support high‑density AI racks, leaving stranded capacity and slowing AI cluster growth.

By eliminating most fans and air‑handling overhead, Iceotope’s architecture can reduce overall data center energy consumption by up to 40%, significantly lowering the cooling share of the power bill and improving effective PUE/TUE.

Precise delivery of coolant to CPUs, GPUs, and memory keeps temperatures tightly controlled, preventing performance‑sapping hotspots and enabling higher rack densities for AI and HPC clusters without overbuilding mechanical plant.

Unlike tank immersion, Iceotope uses a standard vertical rack form factor compatible with COTS servers and existing layouts, easing integration into current CSP and hyperscale campuses while freeing stranded capacity.

Enterprise Security

Enterprise IT teams struggle to secure increasingly complex, distributed environments. Iceotope KUL BOX systems integrate easily into on‑premises environments, operating in near silence and placing no demands on facility water. Iceotope Precision Liquid Cooling lets enterprises keep critical systems and sensitive data inside the organization’s physical and logical perimeter while enabling real‑time analytics and threat detection.

Key security challenges for enterprise IT

Multi‑cloud, SaaS, IoT, and remote work mean data and workloads are scattered across third‑party platforms, APIs, and devices, creating more entry points and blind spots.

Regulations now demand clear control over where data is stored and processed, how it moves across borders, and who can access it—harder to prove when everything runs in public cloud.

Centralizing all inspection and analytics in the cloud can add latency and bandwidth overhead, undermining real‑time monitoring and response for OT, IoT, and AI workloads.

Easier compliance with data‑localization and sector‑specific regulations (network segmentation, custom controls, air‑gapping) because data stays on‑site.

On‑premises compute is ideal for regulated workloads (finance, healthcare, government), highly sensitive IP, and systems requiring strict chain‑of‑custody over data.

On‑premises systems keep data in‑country or on‑site and can keep operating even if cloud or backbone connectivity is lost, which is critical for OT, industrial, and safety‑critical environments.

HPC/Artificial Intelligence

HPC and AI are exposing the limits of air‑cooled data centers, leaving power, space, and sustainability value on the table. Iceotope Precision Liquid Cooling is designed to handle current and future processor roadmaps while allowing data centers to safely drive higher utilization on existing compute without overloading room‑level cooling.

Key infrastructure challenges for HPC and AI workloads

Modern GPUs and accelerators can draw 1–1.5 kW or more each, driving rack densities from a traditional 10–15 kW up to 60–120 kW or more for AI clusters. Conventional air cooling and many legacy facility designs simply can’t keep up.
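To show how per‑accelerator power adds up to the rack densities quoted above, here is a rough back‑of‑the‑envelope sketch. The server counts, accelerators per server, and the 25% allowance for CPUs, memory, networking, and power conversion are illustrative assumptions, not Iceotope figures or measurements from any specific deployment.

```python
def rack_power_kw(accelerators_per_server, servers_per_rack,
                  kw_per_accelerator, overhead_fraction=0.25):
    """Estimate total rack power in kW.

    overhead_fraction is an assumed allowance for everything that is not
    an accelerator (CPUs, memory, NICs, power conversion losses).
    """
    accelerator_kw = accelerators_per_server * servers_per_rack * kw_per_accelerator
    return accelerator_kw * (1 + overhead_fraction)


# Hypothetical rack configurations, chosen only for illustration.
lower = rack_power_kw(accelerators_per_server=8, servers_per_rack=6,
                      kw_per_accelerator=1.0)
upper = rack_power_kw(accelerators_per_server=8, servers_per_rack=8,
                      kw_per_accelerator=1.5)

print(f"Estimated rack power: {lower:.0f} kW to {upper:.0f} kW")
```

Under these assumptions, a rack of six to eight 8‑accelerator servers already lands well above the 10–15 kW that traditional air‑cooled rooms were designed around.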

Dense AI/HPC jobs run at sustained high utilization. When cooling can’t keep up, chips hit thermal limits, clock down, and produce inconsistent performance. Hotspots and uneven airflow worsen the problem.

As AI power demand climbs, operators face tighter ESG targets on energy efficiency, carbon, and water consumption, as well as pushback from communities on new buildouts.

Ready to talk?