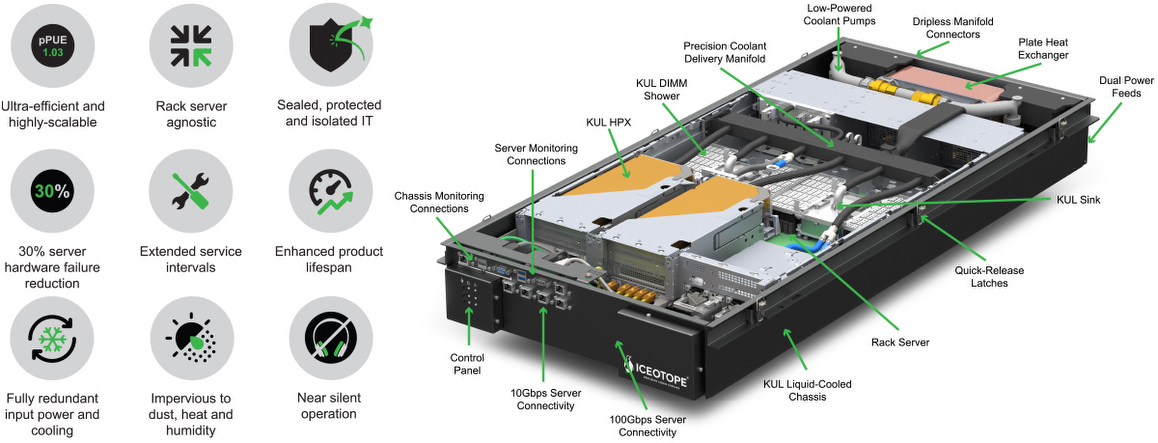

Iceotope Liquid Cooling combines the best attributes of tank immersion and direct-to-chip cooling. Like tank immersion cooling, it offers exceptional sustainability by minimizing water consumption and significantly reducing carbon emissions. This approach recaptures nearly 100% of the heat generated, allowing for efficient reuse and supporting green data center initiatives.

At the same time, Iceotope Liquid Cooling delivers the high performance associated with direct-to-chip cooling. By precisely targeting the hottest components with a small amount of dielectric fluid, it ensures optimal operating temperatures and prevents performance-slowing hotspots. This results in enhanced reliability and efficiency, accommodating the increased compute demands of AI and other high-performance workloads.

Iceotope Liquid Cooling also simplifies integration by using the same rack-based architecture as traditional air-cooled systems, making it compatible with existing infrastructure. This blend of sustainability, performance, and compatibility positions Iceotope Liquid Cooling as the most advanced and versatile cooling solution for modern data centers.

Accelerate sustainability initiatives

Reduces energy use by up to 40%, carbon emissions by up to 40%, and water consumption by up to 100%.

Easily scale distributed workloads

Highly configurable for rapid deployment, from one server to many racks, in any location from the cloud to the edge.

Significantly reduce maintenance costs

30% lower component failure rate and simplified service calls, with on-site hot swap or simple return-to-base swap-out.

Iceotope Liquid Cooling removes nearly 100% of the heat generated by electronic components across the IT equipment through precise delivery of dielectric fluid. This approach reduces energy use by up to 40% and water consumption by up to 100%.

Iceotope Liquid Cooling is designed to integrate seamlessly into your current infrastructure, making it a versatile solution for modern data centers. Whether you are upgrading your existing systems or building new ones, Iceotope Liquid Cooling offers a sustainable and efficient way to meet the demands of AI and other high-performance computing applications.

For more information about Iceotope Liquid Cooling, visit our Learning Hub to explore additional resources and classes about the technology, markets, and industries Iceotope supports.